Build a backend and client from scratch

This guide walks through building the full Conversational AI stack from scratch: a server that issues tokens and manages agent sessions, and a browser client that captures microphone audio and streams live transcripts. You will end up with the same structure as the official Next.js and Python starter repos, but with every step explained.

If you want to hear an agent speak in under five minutes, follow the Voice AI quickstart guide.

What you will build

A complete client-server app:

-

Backend: Three HTTP endpoints:

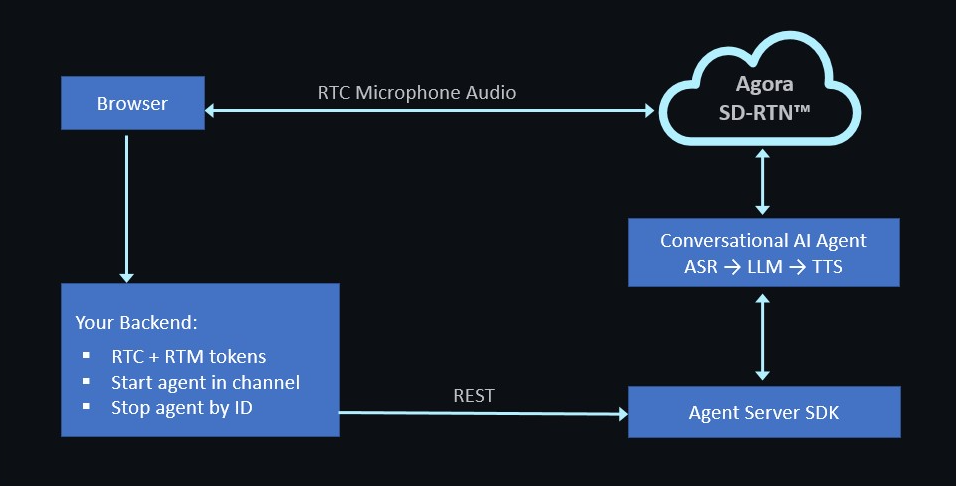

POST /api/token: Issues RTC + RTM tokens for a given channel and UIDPOST /api/invite-agent: Starts the agent using the Agent Server SDKPOST /api/stop-conversation: Stops the agent by ID

-

Frontend: A Next.js page that:

- Requests microphone permission

- Joins the Agora channel using the RTC SDK

- Fetches a token from your backend

- Calls

invite-agentto bring the agent into the channel - Renders live transcripts from RTM

- Calls

stop-conversationon page unload

The architecture looks like this:

The backend never touches audio, and the browser never embeds your App Certificate. This clean separation is the reason you need a backend.

Prerequisites

- An active Agora account.

- git and a terminal.

- One of the following language runtimes:

  - Node.js 20 LTS or later with pnpm (TypeScript)
  - Python 3.11 or later with uv or pip (the Python path also needs Node.js for the Next.js frontend)
  - Go 1.22 or later

- A modern browser with microphone access.

Set up your environment

This section walks you through installing the Agora CLI and scaffolding your project.

Install the Agora CLI

The Agora CLI is the recommended way to bootstrap a new Agora project. Use it to create projects, enable features, write credentials to .env files, and run diagnostics.

To install the Agora CLI, log in, and create a project with Conversational AI enabled:

Confirm that the CLI can read your credentials:

Keep this terminal open. You will reuse these credentials in both the backend and frontend steps.

Scaffold the repo

Follow the steps for your preferred language.

For TypeScript, the backend and frontend live in the same Next.js app.

- Scaffold a Next.js app with TypeScript, the App Router, Tailwind CSS, and ESLint, then install the required Agora packages:

  - agora-agent-server-sdk: Starts and stops agent sessions from the backend
  - agora-rtc-sdk-ng: Handles mic capture and RTC channel joining in the browser
  - agora-rtm-sdk: Receives live transcript messages from the agent
  - agora-token: Generates RTC and RTM tokens

- Write your Agora credentials to .env.local:

  The NEXT_PUBLIC_ prefix makes AGORA_APP_ID available in the browser, which is required to join the RTC channel. Never apply this prefix to APP_CERTIFICATE; it must remain server-side only.
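To enforce that split in code, the server can read its credentials through one function that refuses to run if the certificate was accidentally given the browser prefix. This is a minimal sketch: the variable names NEXT_PUBLIC_AGORA_APP_ID and APP_CERTIFICATE are assumptions, so match whatever names the Agora CLI actually wrote to .env.local.

```typescript
// Sketch only: the variable names are assumptions; use the names the
// Agora CLI actually wrote to .env.local.
type Env = Record<string, string | undefined>;

export interface AgoraServerConfig {
  appId: string;
  appCertificate: string;
}

const APP_ID_VAR = "NEXT_PUBLIC_AGORA_APP_ID"; // assumed name
const CERT_VAR = "APP_CERTIFICATE"; // assumed name, server-side only

export function readAgoraServerEnv(env: Env): AgoraServerConfig {
  // Fail fast if a browser-exposed variable appears to hold the certificate.
  const leaked = Object.keys(env).filter(
    (key) => key.startsWith("NEXT_PUBLIC_") && key.includes("CERTIFICATE"),
  );
  if (leaked.length > 0) {
    throw new Error(`App Certificate exposed to the browser via: ${leaked.join(", ")}`);
  }
  const appId = env[APP_ID_VAR];
  const appCertificate = env[CERT_VAR];
  // A missing certificate is a common cause of a 500 from /api/token.
  if (!appId) throw new Error(`${APP_ID_VAR} is not set`);
  if (!appCertificate) throw new Error(`${CERT_VAR} is not set`);
  return { appId, appCertificate };
}
```

Call it with process.env inside API routes only, never from client components, so the certificate never enters the browser bundle.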

For Python, the backend and frontend are separate apps in a single monorepo.

- Scaffold the monorepo, create a Python virtual environment, and install the required packages:

  - agora-agent-server-sdk: Starts and stops agent sessions from the backend
  - agora-token-builder: Generates RTC and RTM tokens
  - agora-rtc-sdk-ng: Handles mic capture and RTC channel joining in the browser
  - agora-rtm-sdk: Receives live transcript messages from the agent

- Write your Agora credentials to the backend and frontend environment files:

  The backend reads APP_ID and APP_CERTIFICATE directly. The frontend only receives APP_ID through NEXT_PUBLIC_AGORA_APP_ID. The certificate never leaves server-python/.

The Go backend walkthrough is not yet available. It will mirror the Python path: a standalone backend on port 8000, a Next.js web client on port 3000, and the same three HTTP endpoints.

In the meantime, use the Voice AI quickstart for a Go agent walkthrough that runs as a single process without a frontend.

Build the backend

The backend exposes three endpoints, one for each operation the frontend needs.

For TypeScript, each endpoint is an API route in the same Next.js app as the frontend.

Generate tokens

Endpoint: POST /api/token

This endpoint builds an RTC token and an RTM token for the browser client. It is the only place the App Certificate is used.
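As a hedged sketch, the framework-independent part of this handler might look like the following. The request shape { channel, uid }, the response field names, and the TokenBuilders interface are assumptions for illustration. In a real route you would implement the builders with the agora-token package (for example its RtcTokenBuilder and RtmTokenBuilder classes); check that package's documentation for exact signatures and expiry units.

```typescript
// Hypothetical interface: the real implementation would call agora-token
// with the App ID and App Certificate, which never leave the server.
export interface TokenBuilders {
  buildRtc(channel: string, uid: number): string;
  buildRtm(userId: string): string;
}

export type TokenResult =
  | { status: 200; body: { rtcToken: string; rtmToken: string } }
  | { status: 400; body: { error: string } };

const MAX_UID = 0xffffffff; // RTC UIDs are 32-bit unsigned integers

export function handleTokenRequest(body: unknown, builders: TokenBuilders): TokenResult {
  if (typeof body !== "object" || body === null) {
    return { status: 400, body: { error: "expected a JSON object" } };
  }
  const { channel, uid } = body as { channel?: unknown; uid?: unknown };
  // Loose sanity check only, not Agora's full channel-name rules.
  if (typeof channel !== "string" || channel.length === 0 || channel.length > 64) {
    return { status: 400, body: { error: "invalid channel" } };
  }
  if (typeof uid !== "number" || !Number.isInteger(uid) || uid < 1 || uid > MAX_UID) {
    return { status: 400, body: { error: "invalid uid" } };
  }
  return {
    status: 200,
    body: {
      rtcToken: builders.buildRtc(channel, uid),
      // Assumption: the RTM user ID is the RTC UID as a string.
      rtmToken: builders.buildRtm(String(uid)),
    },
  };
}
```

Injecting the builders keeps validation testable without real credentials; the route itself only parses the JSON body and maps the result to an HTTP response.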

Start an agent session

Endpoint: POST /api/invite-agent

This endpoint uses the Agent Server SDK to configure an agent and start a session. The STT, LLM, and TTS configurations use Agora-managed presets and therefore do not require an apiKey.
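One detail this endpoint has to handle is a double click or a React re-render calling invite-agent twice for the same channel. A minimal sketch, assuming a single long-running server process and a hypothetical startAgent callback that wraps your Agent Server SDK call and resolves to the agentId, deduplicates starts per channel:

```typescript
// Hypothetical callback: wraps the Agent Server SDK call in your route and
// resolves to the new agent's ID.
export type StartAgent = (channel: string) => Promise<string>;

export class AgentRegistry {
  private readonly byChannel = new Map<string, Promise<string>>();
  private readonly startAgent: StartAgent;

  constructor(startAgent: StartAgent) {
    this.startAgent = startAgent;
  }

  // Returns the agent already started (or starting) for this channel,
  // or starts exactly one.
  invite(channel: string): Promise<string> {
    const existing = this.byChannel.get(channel);
    if (existing) return existing;
    const pending = this.startAgent(channel).catch((err: unknown) => {
      // Forget failed starts so a later invite can retry.
      this.byChannel.delete(channel);
      throw err;
    });
    this.byChannel.set(channel, pending);
    return pending;
  }

  // Call after a successful stop so the channel can host a new agent.
  forget(channel: string): void {
    this.byChannel.delete(channel);
  }
}
```

An in-memory Map does not survive restarts or span multiple serverless instances; in those deployments the same idea needs shared storage such as a database or cache.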

Stop an agent session

Endpoint: POST /api/stop-conversation

This endpoint stops a running agent session by ID.
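A hedged sketch of the route's validation, assuming the request body is { agentId } and a hypothetical stopAgent callback that wraps the Agent Server SDK's stop call. On success it returns { stopped: true }, the shape the verification checks in this guide expect.

```typescript
// Hypothetical callback: wraps the Agent Server SDK's stop call in your route.
export type StopAgent = (agentId: string) => Promise<void>;

export type StopResult =
  | { status: 200; body: { stopped: true } }
  | { status: 400; body: { error: string } };

export async function handleStopRequest(body: unknown, stopAgent: StopAgent): Promise<StopResult> {
  // Extract agentId without trusting the shape of the incoming JSON.
  const agentId =
    typeof body === "object" && body !== null
      ? (body as { agentId?: unknown }).agentId
      : undefined;
  if (typeof agentId !== "string" || agentId.length === 0) {
    return { status: 400, body: { error: "agentId is required" } };
  }
  await stopAgent(agentId);
  return { status: 200, body: { stopped: true } };
}
```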

For Python, all backend code lives in server-python/main.py.

Generate tokens

Endpoint: POST /api/token

This endpoint generates an RTC token and an RTM token for the browser client. It is the only place the App Certificate is used.

Start an agent session

Endpoint: POST /api/invite-agent

This endpoint uses the Agent Server SDK to configure an agent and start a session in the caller's channel. The STT, LLM, and TTS configurations use Agora-managed presets and therefore do not require an api_key.

Add the following to main.py:

Stop an agent session

Endpoint: POST /api/stop-conversation

This endpoint stops a running agent session by ID.

Add the following to main.py:

To start the backend:

Swagger docs are available at http://localhost:8000/docs.

The Go backend walkthrough is not yet available. It will mirror the Python path: a standalone backend on port 8000, a Next.js web client on port 3000, and the same three HTTP endpoints. In the meantime, see the Voice AI quickstart guide to get started with Go.

Build the frontend

The frontend is the same Next.js app for all three backends. The only difference is whether it calls its own API routes (TypeScript) or a separate backend on port 8000 (Python and Go).

A basic API client

Create lib/api.ts to give the frontend a single place to manage the backend URL and endpoint calls.

For TypeScript, NEXT_PUBLIC_BACKEND_URL is not set in .env.local, so calls go to same-origin routes like /api/token. For Python, the scaffold step sets it to http://localhost:8000, so calls go to the external backend. The Go backend will use the same port once its walkthrough is available.
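A minimal sketch of lib/api.ts, assuming each endpoint takes and returns JSON. The request field names (channel, uid, agentId) and response shapes are assumptions that must match your backend. fetch is passed in so the module can be exercised without a browser.

```typescript
// Minimal structural type for the subset of fetch this client uses.
export type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

// A missing or empty base URL means same-origin routes (TypeScript setup).
export function endpoint(baseUrl: string | undefined, path: string): string {
  const base = (baseUrl ?? "").replace(/\/+$/, "");
  return `${base}${path}`;
}

export function createApiClient(baseUrl: string | undefined, fetchImpl: FetchLike) {
  async function post<T>(path: string, payload: unknown): Promise<T> {
    const res = await fetchImpl(endpoint(baseUrl, path), {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(payload),
    });
    // Produces messages like "/api/token failed: 500".
    if (!res.ok) throw new Error(`${path} failed: ${res.status}`);
    return (await res.json()) as T;
  }

  return {
    getToken: (channel: string, uid: number) =>
      post<{ rtcToken: string; rtmToken: string }>("/api/token", { channel, uid }),
    inviteAgent: (channel: string) =>
      post<{ agentId: string }>("/api/invite-agent", { channel }),
    stopConversation: (agentId: string) =>
      post<{ stopped: boolean }>("/api/stop-conversation", { agentId }),
  };
}
```

In the app, you might create one client from process.env.NEXT_PUBLIC_BACKEND_URL and a small wrapper that forwards to the browser's fetch.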

Create the RTC and RTM hook

Create hooks/useConvoAgent.ts. This hook joins the RTC channel for audio, connects to RTM for transcripts, and exposes start() and stop() functions to the UI.

The hook follows the same structure as components/ConversationComponent.tsx in the Next.js starter repo.
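The ordering inside start() and stop() can be sketched independently of React and the Agora SDKs. In this sketch, SessionDeps is a hypothetical interface: in a real hook, its methods would wrap lib/api.ts, the RTC client, and the RTM client, and you might keep the returned object in a useRef. It is not the starter repo's code.

```typescript
// Hypothetical dependencies; the real hook would wrap the API client and SDKs.
export interface SessionDeps {
  getToken(channel: string, uid: number): Promise<{ rtcToken: string; rtmToken: string }>;
  joinRtc(channel: string, uid: number, token: string): Promise<void>;
  loginRtm(uid: number, token: string): Promise<void>;
  inviteAgent(channel: string): Promise<{ agentId: string }>;
  stopConversation(agentId: string): Promise<unknown>;
  leave(): Promise<void>;
}

export function createSession(deps: SessionDeps, channel: string, uid: number) {
  let agentId: string | null = null;

  async function start(): Promise<string> {
    // Fetch a fresh token on every start() so an expired one is never reused.
    const { rtcToken, rtmToken } = await deps.getToken(channel, uid);
    try {
      await deps.joinRtc(channel, uid, rtcToken);
      await deps.loginRtm(uid, rtmToken);
      // Invite only once the user is in the channel and listening for transcripts.
      const res = await deps.inviteAgent(channel);
      agentId = res.agentId;
      return res.agentId;
    } catch (err) {
      await deps.leave(); // undo a partial join before surfacing the error
      throw err;
    }
  }

  async function stop(): Promise<void> {
    const id = agentId;
    agentId = null;
    try {
      if (id) await deps.stopConversation(id);
    } finally {
      // Leave the channels even if the stop request fails.
      await deps.leave();
    }
  }

  return { start, stop, currentAgentId: () => agentId };
}
```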

Build the client UI

Create app/page.tsx as the main UI. It renders a start/stop button and a live transcript list.

The page has no state library or design system. It provides just enough UI to verify that the backend is working.
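The transcript list has to replace partial lines (final: false) as they grow instead of appending duplicates. A minimal sketch, assuming a hypothetical TranscriptLine shape that you map the agent's RTM payload onto; the field names are not the actual RTM message schema:

```typescript
// Hypothetical shape; map the agent's RTM transcript payload onto it.
export interface TranscriptLine {
  turnId: number;
  speaker: "user" | "agent";
  text: string;
  final: boolean;
}

// Replaces the line for the same turn and speaker, or appends a new one.
// Always returns a new array so React state updates stay immutable.
export function upsertTranscript(
  lines: readonly TranscriptLine[],
  next: TranscriptLine,
): TranscriptLine[] {
  const index = lines.findIndex(
    (line) => line.turnId === next.turnId && line.speaker === next.speaker,
  );
  if (index === -1) return [...lines, next];
  const current = lines[index];
  // Ignore a late partial that arrives after the turn was finalized.
  if (current && current.final && !next.final) return lines.slice();
  const copy = lines.slice();
  copy[index] = next;
  return copy;
}
```

With React state, each incoming message would be applied through a functional update that passes the previous list and the new line to upsertTranscript.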

Handle page unload (optional)

Add this effect inside app/page.tsx to stop the agent cleanly when the user closes the tab, rather than waiting for the 30-second idle timeout.
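As a hedged sketch of that effect, the function below registers a pagehide listener and returns a cleanup function, so it can be returned directly from useEffect. The send callback and the { agentId } body are assumptions. In the browser, send could call fetch with keepalive: true, which lets the request outlive the page. pagehide is used here because it generally fires more reliably than unload, especially on mobile browsers.

```typescript
// Minimal structural type so the sketch works with window or a test double.
export interface EventTargetLike {
  addEventListener(type: string, listener: () => void): void;
  removeEventListener(type: string, listener: () => void): void;
}

export function registerStopOnPageHide(
  target: EventTargetLike,
  getAgentId: () => string | null,
  send: (url: string, body: string) => void,
  stopUrl: string,
): () => void {
  const onPageHide = () => {
    // Read the ID at event time, not registration time, so it is current.
    const agentId = getAgentId();
    if (agentId) send(stopUrl, JSON.stringify({ agentId }));
  };
  target.addEventListener("pagehide", onPageHide);
  // Returned cleanup removes the listener when the component unmounts.
  return () => target.removeEventListener("pagehide", onPageHide);
}
```

Inside the effect, you would pass window, a getter that reads the current agent ID from a ref, the send callback, and the stop-conversation URL.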

Test and validate

Start the app and verify that the agent joins, responds, and stops cleanly.

Run the app

For TypeScript, start the Next.js development server, then open http://localhost:3000, click Start conversation, allow microphone access, and speak.

For Python, start the backend and frontend in two separate terminals:

Then open http://localhost:3000, click Start conversation, allow microphone access, and speak.

For Go, the walkthrough is not yet available. See the Voice AI quickstart guide in the meantime.

Verify the integration

A healthy run passes all three checks:

| Check | How to verify | Time budget |
|---|---|---|
| Agent joined the channel | The invite-agent response resolves with an agentId, and the agent emits a greeting in RTC within two seconds. | < 2 s |
| Transcripts stream | The transcripts state updates as you speak; partial lines are marked final: false. | < 500 ms partial latency |
| Stop is clean | After Stop, the backend returns { stopped: true }, and the Convo AI engine logs STATE=STOPPED, reason=API. | Immediate |
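The first and third checks can also be asserted in code during development. A minimal sketch: the type guards mirror the response shapes in the table, and timed is a hypothetical helper for comparing a step against its time budget. Neither is part of any Agora SDK.

```typescript
// True when the invite-agent response carries a non-empty agentId.
export function isInviteResponse(value: unknown): value is { agentId: string } {
  if (typeof value !== "object" || value === null) return false;
  const agentId = (value as { agentId?: unknown }).agentId;
  return typeof agentId === "string" && agentId.length > 0;
}

// True only for the exact { stopped: true } shape, not truthy look-alikes.
export function isStopResponse(value: unknown): value is { stopped: true } {
  return typeof value === "object" && value !== null && (value as { stopped?: unknown }).stopped === true;
}

// Measures how long an async step takes, in milliseconds.
export async function timed<T>(
  step: () => Promise<T>,
  now: () => number = Date.now,
): Promise<{ value: T; ms: number }> {
  const startedAt = now();
  const value = await step();
  return { value, ms: now() - startedAt };
}
```

For example, wrapping the invite call in timed and checking isInviteResponse on the result gives a quick pass/fail for the "Agent joined the channel" row, although it does not measure when the greeting is heard.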

If you run into problems, first run the CLI diagnostic:

This checks for credential errors, feature-enablement issues, and network reachability problems.

Troubleshooting

| Symptom | Likely cause | Fix |
|---|---|---|
| Error: /api/token failed: 500 in the browser | Backend cannot read the APP_CERTIFICATE environment variable. | Confirm .env.local (TypeScript) or server-python/.env (Python) contains the variable and that the server loaded the file on startup. |
| invalid token from the RTC join | Clock skew between token generation and channel join. | RTC tokens are time-sensitive. Regenerate a token on each start() call to avoid expiry issues. |
| Agent never speaks but agentId is returned | Conversational AI feature not enabled on the Agora project. | Run agora project feature list. If convoai is missing, rerun agora project create --feature convoai or enable it in the Agora Console. |
| No transcripts in RTM | enable_rtm not set, or data_channel is set to stream instead of rtm. | Confirm advancedFeatures.enable_rtm: true and parameters.data_channel: 'rtm' in the agent config. |
| CORS error in the browser (Python) | FastAPI CORS middleware does not include your frontend origin. | Add http://localhost:3000 to allow_origins in main.py. |
| Agent greets itself in a loop | No echo cancellation on the device. | Use headphones, or set parameters.enable_aec: true. |
| unauthorized error on agora login in CI | SSO browser flow cannot open on a headless machine. | Use agora login --device for the device-code flow. |
| Chrome blocks microphone access | getUserMedia is only available in secure contexts (HTTPS or localhost), so a LAN IP over plain HTTP fails. | Test on http://localhost:3000, or serve the app over HTTPS when testing from another device. |
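The "No transcripts in RTM" row can be caught before the agent starts. A minimal sketch, assuming your agent config is a plain object with the dotted paths named in the table; AgentConfigSubset models only those fields and is a hypothetical type, not the Agent Server SDK's own:

```typescript
// Hypothetical subset of the agent config: only the fields named in the table.
export interface AgentConfigSubset {
  advancedFeatures?: { enable_rtm?: boolean };
  parameters?: { data_channel?: string; enable_aec?: boolean };
}

// Returns human-readable problems that would prevent transcripts over RTM.
export function findTranscriptConfigProblems(config: AgentConfigSubset): string[] {
  const problems: string[] = [];
  if (config.advancedFeatures?.enable_rtm !== true) {
    problems.push("advancedFeatures.enable_rtm must be true");
  }
  if (config.parameters?.data_channel !== "rtm") {
    problems.push("parameters.data_channel must be 'rtm'");
  }
  return problems;
}
```

Running this in the invite-agent route and logging any problems makes the misconfiguration visible on the server instead of as a silent transcript panel.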

Next steps

Now that you have a working agent, explore the following topics:

- Integrate an MLLM: Replace the cascading STT → LLM → TTS pipeline with a single realtime model.

- Transmit custom information: Guide the agent with user-specific context to personalize responses.

- Integrate short-term memory: Help the agent maintain context across a conversation.

- Receive webhook notifications: Receive agent event notifications in real time.

- Use filler words: Reduce perceived latency by filling silence during LLM processing.

- Optimize conversation latency: Tune LLM, ASR, and TTS components for lower end-to-end latency.