Screen sharing enables the host of an interactive live streaming broadcast or video call to display what is on their screen to other users in the channel. It is particularly useful for communicating information in the following scenarios:

- During video conferencing, the speaker can share a local image, web page, or full presentation with other participants.

- For online instruction, the teacher can share slides or notes with students.

This section describes how to implement screen sharing using the Android SDK v3.7.0 and later.

Before you begin, ensure that you understand how to start a video call or start interactive video streaming. For details, see Start a Video Call or Start Interactive Video Streaming.

- Copy the AgoraScreenShareExtension.aar file of the SDK to the /app/libs/ directory.

- Add the following line in the dependencies node in the /app/build.gradle file to support importing files in the AAR format.

  implementation fileTree(dir: "libs", include: ["*.jar","*.aar"])

- Call startScreenCapture to start screen sharing.

Agora provides an open-source sample project on GitHub for your reference.

Some restrictions and cautions exist for using the screen sharing feature, and charges can apply. Agora recommends that you read the following API references before calling the API:

This section describes how to implement screen sharing using the Android SDK earlier than v3.7.0.

Before you begin, ensure that you understand how to start a video call or start interactive video streaming. For details, see Start a Video Call or Start Interactive Video Streaming.

The Agora SDK earlier than v3.7.0 does not provide any method for screen sharing on Android. Therefore, you need to implement this function using the native screen-capture APIs provided by Android and the custom video-source APIs provided by Agora.

- Use android.media.projection and android.hardware.display.VirtualDisplay to get and pass the screen-capture data.

- Create an OpenGL ES environment. Create a SurfaceView object and pass the object to VirtualDisplay, which works as the recipient of the screen-capture data.

- Get the screen-capture data from the callbacks of SurfaceView. Use either the Push mode or mediaIO mode to push the screen-capture data to the SDK. For details, see Custom Video Source and Renderer.

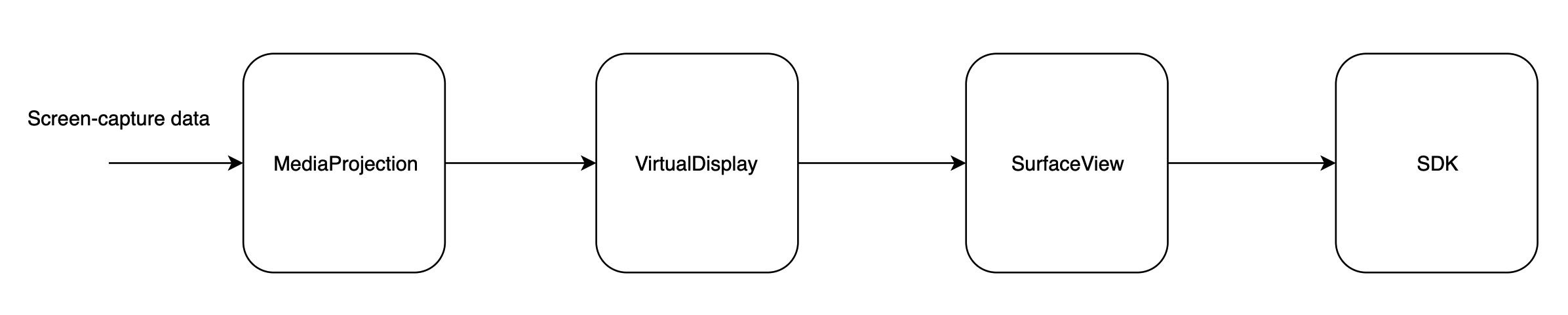

The following diagram shows how data is transferred during screen sharing on Android:

The code samples provided in this section use the MediaProjection and VirtualDisplay APIs provided by Android and have the following Android/API version requirements:

- The Android version must be Lollipop or higher.

- To use MediaProjection APIs, the Android API level must be 21 or higher.

- To use VirtualDisplay APIs, the Android API level must be 19 or higher.

For detailed usage and considerations, see the Android documentation for MediaProjection and VirtualDisplay.
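The version requirements above can be expressed as a small guard that runs before any capture code. The following is a minimal sketch in plain Java, not part of the Agora SDK or the Android framework: the class name ScreenCaptureSupport and its members are hypothetical, and the sdkInt argument stands in for android.os.Build.VERSION.SDK_INT.

```java
// Hypothetical helper; not part of the Agora SDK or the Android framework.
final class ScreenCaptureSupport {
    // API level that introduced VirtualDisplay.
    static final int VIRTUAL_DISPLAY_MIN_API = 19;
    // API level that introduced MediaProjection (Lollipop).
    static final int MEDIA_PROJECTION_MIN_API = 21;

    private ScreenCaptureSupport() {}

    // Screen capture needs both APIs, so the stricter requirement decides.
    static boolean isSupported(int sdkInt) {
        return sdkInt >= Math.max(VIRTUAL_DISPLAY_MIN_API, MEDIA_PROJECTION_MIN_API);
    }
}
```

On a device, a caller would pass Build.VERSION.SDK_INT and skip the screen sharing entry point when the method returns false.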

- Implement IVideoSource and IVideoFrameConsumer, and override the callbacks in IVideoSource.

// Implements the IVideoSource interface
public class ExternalVideoInputManager implements IVideoSource {
    // Gets the IVideoFrameConsumer object when initializing the video source
    public boolean onInitialize(IVideoFrameConsumer consumer) {
        mConsumer = consumer;
        return true;
    }
    public boolean onStart() {
        return true;
    }
    public void onStop() {
    }
    // Sets IVideoFrameConsumer as null when IVideoFrameConsumer is released by the media engine
    public void onDispose() {
        Log.e(TAG, "SwitchExternalVideo-onDispose");
        mConsumer = null;
    }
    public int getBufferType() {
        return TEXTURE.intValue();
    }
    public int getCaptureType() {
        // ...
    }
    public int getContentHint() {
        return MediaIO.ContentHint.NONE.intValue();
    }
}

// Holds the IVideoFrameConsumer object passed in by the SDK
private volatile IVideoFrameConsumer mConsumer;
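The lifecycle above (store the consumer in onInitialize, clear it in onDispose, and check it before every frame) can be modeled without the SDK. The following is a sketch in plain Java under stated assumptions: FrameConsumer and FrameSource are hypothetical stand-ins for IVideoFrameConsumer and IVideoSource, and the consume method is a simplified placeholder rather than the real consumeTextureFrame signature.

```java
// Hypothetical stand-in for IVideoFrameConsumer.
interface FrameConsumer {
    void consume(int textureId, long timestampMs);
}

// Hypothetical stand-in for a class that implements IVideoSource.
final class FrameSource {
    // volatile: written by the engine's callbacks, read by the capture thread.
    private volatile FrameConsumer consumer;

    boolean onInitialize(FrameConsumer c) {
        consumer = c;
        return true;
    }

    void onDispose() {
        consumer = null;
    }

    // Returns true if the frame was handed over, false if no consumer is attached.
    boolean pushFrame(int textureId, long timestampMs) {
        FrameConsumer c = consumer; // read once so onDispose() cannot null it mid-call
        if (c == null) {
            return false;
        }
        c.consume(textureId, timestampMs);
        return true;
    }
}
```

Copying the volatile field into a local variable before the null check is a common way to avoid a NullPointerException when the consumer is released on another thread between the check and the call.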

- Set the custom video source before joining a channel.

// Sets the input thread of the custom video source
// In the sample project, we use the class in the open-source grafika project, which encapsulates the graphics architecture of Android. For details, see https://source.android.com/devices/graphics/architecture
// For detailed implementation of EglCore, GlUtil, EGLContext, and ProgramTextureOES, see https://github.com/google/grafika
// The GLThreadContext class contains EglCore, EGLContext, and ProgramTextureOES
private void prepare() {
    // Creates an OpenGL ES environment based on EglCore
    mEglCore = new EglCore();
    mEglSurface = mEglCore.createOffscreenSurface(1, 1);
    mEglCore.makeCurrent(mEglSurface);
    // Creates an external OES texture object using GlUtil
    mTextureId = GlUtil.createTextureObject(GLES11Ext.GL_TEXTURE_EXTERNAL_OES);
    // Creates a SurfaceTexture object based on the texture
    mSurfaceTexture = new SurfaceTexture(mTextureId);
    // Creates a Surface object based on SurfaceTexture
    mSurface = new Surface(mSurfaceTexture);
    // Passes EglCore, the EGL context, and ProgramTextureOES to GLThreadContext as its members
    mThreadContext = new GLThreadContext();
    mThreadContext.eglCore = mEglCore;
    mThreadContext.context = mEglCore.getEGLContext();
    mThreadContext.program = new ProgramTextureOES();
    // Sets the custom video source
    ENGINE.setVideoSource(ExternalVideoInputManager.this);
}

- Create an intent based on MediaProjection, and pass the intent to the startActivityForResult() method to start capturing screen data.

private class VideoInputServiceConnection implements ServiceConnection {
    public void onServiceConnected(ComponentName componentName, IBinder iBinder) {
        mService = (IExternalVideoInputService) iBinder;
        // Starts capturing screen data. The Android version must be Lollipop or higher.
        if (android.os.Build.VERSION.SDK_INT >= android.os.Build.VERSION_CODES.LOLLIPOP) {
            // Instantiates a MediaProjectionManager object
            MediaProjectionManager mpm = (MediaProjectionManager)
                    getContext().getSystemService(Context.MEDIA_PROJECTION_SERVICE);
            Intent intent = mpm.createScreenCaptureIntent();
            // Starts screen capturing
            startActivityForResult(intent, PROJECTION_REQ_CODE);
        }
    }
    // ...
}

- Get the screen-capture data information from the activity result.

// Gets the intent of the data information from the activity result
public void onActivityResult(int requestCode, int resultCode, @Nullable Intent data) {
    super.onActivityResult(requestCode, resultCode, data);
    if (requestCode == PROJECTION_REQ_CODE && resultCode == RESULT_OK) {
        try {
            // Sets the custom video source as the screen-capture data
            mService.setExternalVideoInput(ExternalVideoInputManager.TYPE_SCREEN_SHARE, data);
        } catch (RemoteException e) {
            // ...
        }
    }
}

The implementation of setExternalVideoInput(int type, Intent intent) is as follows:

// Gets the parameters of the screen-capture data from intent
boolean setExternalVideoInput(int type, Intent intent) {
    if (mCurInputType == type && mCurVideoInput != null
            && mCurVideoInput.isRunning()) {
        return false;
    }
    IExternalVideoInput input;
    switch (type) {
        case TYPE_SCREEN_SHARE:
            // Gets the screen-capture parameters from the intent of MediaProjection
            int width = intent.getIntExtra(FLAG_SCREEN_WIDTH, DEFAULT_SCREEN_WIDTH);
            int height = intent.getIntExtra(FLAG_SCREEN_HEIGHT, DEFAULT_SCREEN_HEIGHT);
            int dpi = intent.getIntExtra(FLAG_SCREEN_DPI, DEFAULT_SCREEN_DPI);
            int fps = intent.getIntExtra(FLAG_FRAME_RATE, DEFAULT_FRAME_RATE);
            Log.i(TAG, "ScreenShare:" + width + "|" + height + "|" + dpi + "|" + fps);
            // Instantiates a ScreenShareInput class using the screen-capture parameters
            input = new ScreenShareInput(context, width, height, dpi, fps, intent);
            break;
        // ...
    }
    // Sets the captured video data as the ScreenShareInput object, and creates an input thread for external video data
    setExternalVideoInput(input);
    mCurInputType = type;
    return true;
}
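The check at the top of setExternalVideoInput avoids restarting an input that is already active. One way to read that guard is sketched below in plain Java. This is an illustration only: VideoInput and InputSwitcher are hypothetical names, and the boolean return convention (false when nothing changes, true after a switch) is an assumption rather than something the source states.

```java
// Hypothetical stand-in for IExternalVideoInput.
interface VideoInput {
    boolean isRunning();
}

final class InputSwitcher {
    private int currentType = -1; // -1 means no input has been selected yet
    private VideoInput currentInput;

    // Returns false when the requested type is already running, true after switching.
    boolean switchTo(int type, VideoInput input) {
        if (currentType == type && currentInput != null && currentInput.isRunning()) {
            return false; // same input is still running: nothing to do
        }
        currentInput = input;
        currentType = type;
        return true;
    }
}
```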

- During the initialization of the input thread of the external video data, create a VirtualDisplay object with MediaProjection, and render VirtualDisplay on SurfaceView.

public void onVideoInitialized(Surface target) {
    MediaProjectionManager pm = (MediaProjectionManager)
            mContext.getSystemService(Context.MEDIA_PROJECTION_SERVICE);
    mMediaProjection = pm.getMediaProjection(Activity.RESULT_OK, mIntent);
    if (mMediaProjection == null) {
        Log.e(TAG, "media projection start failed");
        return;
    }
    // Creates VirtualDisplay with MediaProjection, and renders VirtualDisplay on SurfaceView
    mVirtualDisplay = mMediaProjection.createVirtualDisplay(
            VIRTUAL_DISPLAY_NAME, mSurfaceWidth, mSurfaceHeight, mScreenDpi,
            DisplayManager.VIRTUAL_DISPLAY_FLAG_PUBLIC, target,
            null, null);
}

- Use SurfaceView as the custom video source. After the user joins the channel, the custom video module gets the screen-capture data using consumeTextureFrame in ExternalVideoInputThread and passes the data to the SDK.

// Calls updateTexImage() to update the data to the texture object of OpenGL ES
// Calls getTransformMatrix() to get the texture transform matrix
try {
    mSurfaceTexture.updateTexImage();
    mSurfaceTexture.getTransformMatrix(mTransform);
} catch (Exception e) {
    // ...
}
// Gets the screen-capture data from onFrameAvailable. onFrameAvailable is overridden in ScreenShareInput and receives information such as the texture ID and the transform matrix
// No need to render the screen-capture data on the local view
if (mCurVideoInput != null) {
    mCurVideoInput.onFrameAvailable(mThreadContext, mTextureId, mTransform);
}
mEglCore.makeCurrent(mEglSurface);
GLES20.glViewport(0, 0, mVideoWidth, mVideoHeight);
if (mConsumer != null) {
    Log.e(TAG, "SDK encoding->width:" + mVideoWidth + ",height:" + mVideoHeight);
    // Calls consumeTextureFrame to pass the video data to the SDK
    mConsumer.consumeTextureFrame(mTextureId,
            TEXTURE_OES.intValue(),
            mVideoWidth, mVideoHeight, 0,
            System.currentTimeMillis(), mTransform);
}
// Waits for the next frame
// ...
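The source does not show how the thread waits for the next frame. A common approach is to derive the frame interval from the target frame rate and sleep for whatever part of that interval the current frame has not used. The sketch below illustrates that idea in plain Java; FramePacer and its method names are hypothetical and are not taken from the sample project.

```java
// Hypothetical pacing helper for a capture loop.
final class FramePacer {
    private final long frameIntervalMs;

    FramePacer(int fps) {
        if (fps <= 0) {
            throw new IllegalArgumentException("fps must be positive");
        }
        // Integer division: 15 fps gives a 66 ms interval.
        this.frameIntervalMs = 1000L / fps;
    }

    // Time left to wait after spending elapsedMs on the current frame; never negative.
    long remainingWaitMs(long elapsedMs) {
        return Math.max(0L, frameIntervalMs - elapsedMs);
    }
}
```

In a loop, the thread would measure how long the frame took and then call Thread.sleep(pacer.remainingWaitMs(elapsed)) before processing the next one.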

Agora provides an open-source sample project on GitHub to show how to switch the video between screen sharing and the camera.

Currently, the Agora Video SDK for Android supports creating only one RtcEngine instance per app. You need multi-processing to send video from screen sharing and the local camera at the same time.

You need to create separate processes for screen sharing and video captured by the local camera. Both processes use the following methods to send video data to the SDK:

- Local-camera process: Implemented with joinChannel. This process is often the main process and communicates with the screen sharing process via AIDL (Android Interface Definition Language). See Android documentation to learn more about AIDL.

- Screen sharing process: Implemented with MediaProjection, VirtualDisplay, and custom video capture. The screen sharing process creates an RtcEngine object and uses the object to create and join a channel for screen sharing.

The users of both processes must join the same channel. After joining a channel, a user subscribes to the audio and video streams of all other users in the channel by default, thus incurring all associated usage costs. Because the screen sharing process is only used to publish the screen sharing stream, you can call muteAllRemoteAudioStreams(true) and muteAllRemoteVideoStreams(true) to mute remote audio and video streams.

The following sample code uses multi-processing to send the screen sharing video and the locally captured video at the same time. AIDL is used to communicate between the processes.

- Configure android:process in AndroidManifest.xml for the components.

<activity
    android:name=".impl.ScreenCapture$ScreenCaptureAssistantActivity"
    android:process=":screensharingsvc"
    android:screenOrientation="fullUser"
    android:theme="@android:style/Theme.Translucent" />
<service
    android:name=".impl.ScreenSharingService"
    android:process=":screensharingsvc">
    <intent-filter>
        <action android:name="android.intent.action.screenshare" />
    </intent-filter>
</service>

- Create an AIDL interface, which includes methods to communicate between processes.

// Includes methods to manage the screen sharing process
// IScreenSharing.aidl
package io.agora.rtc.ss.aidl;
import io.agora.rtc.ss.aidl.INotification;
interface IScreenSharing {
    void registerCallback(INotification callback);
    void unregisterCallback(INotification callback);
    void startShare();
    void stopShare();
    void renewToken(String token);
}

// Includes callbacks to receive notifications from the screen sharing process
// INotification.aidl
package io.agora.rtc.ss.aidl;
interface INotification {
    void onError(int error);
    void onTokenWillExpire();
}
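The registerCallback and unregisterCallback methods imply that the screen sharing service keeps a list of listeners and notifies each of them. The sketch below models that bookkeeping in a single process with plain Java. It is an assumption-laden illustration: Notification and NotificationRegistry are hypothetical names standing in for INotification and the service-side logic, and a real cross-process service would typically rely on android.os.RemoteCallbackList instead of a plain list.

```java
// Hypothetical in-process stand-in for the INotification AIDL interface.
interface Notification {
    void onError(int error);
    void onTokenWillExpire();
}

// Sketch of the service-side bookkeeping behind registerCallback / unregisterCallback.
final class NotificationRegistry {
    // Copy-on-write list: callbacks can be added or removed while a dispatch is running.
    private final java.util.List<Notification> callbacks =
            new java.util.concurrent.CopyOnWriteArrayList<>();

    void registerCallback(Notification callback) {
        if (callback != null && !callbacks.contains(callback)) {
            callbacks.add(callback);
        }
    }

    void unregisterCallback(Notification callback) {
        callbacks.remove(callback);
    }

    void notifyError(int error) {
        for (Notification cb : callbacks) {
            cb.onError(error);
        }
    }

    void notifyTokenWillExpire() {
        for (Notification cb : callbacks) {
            cb.onTokenWillExpire();
        }
    }
}
```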

- Implement the screen sharing process. Screen sharing is implemented with MediaProjection, VirtualDisplay, and custom video source. The screen sharing process creates an RtcEngine object and uses the object to create and join a channel for screen sharing.

// Define the ScreenSharingClient object
public class ScreenSharingClient {
    private static final String TAG = ScreenSharingClient.class.getSimpleName();
    private static IScreenSharing mScreenShareSvc;
    private IStateListener mStateListener;
    private static volatile ScreenSharingClient mInstance;
    // ...

    public static ScreenSharingClient getInstance() {
        if (mInstance == null) {
            synchronized (ScreenSharingClient.class) {
                if (mInstance == null) {
                    mInstance = new ScreenSharingClient();
                }
            }
        }
        return mInstance;
    }

    // Start screen sharing
    public void start(Context context, String appId, String token, String channelName, int uid, VideoEncoderConfiguration vec) {
        if (mScreenShareSvc == null) {
            Intent intent = new Intent(context, ScreenSharingService.class);
            intent.putExtra(Constant.APP_ID, appId);
            intent.putExtra(Constant.ACCESS_TOKEN, token);
            intent.putExtra(Constant.CHANNEL_NAME, channelName);
            intent.putExtra(Constant.UID, uid);
            intent.putExtra(Constant.WIDTH, vec.dimensions.width);
            intent.putExtra(Constant.HEIGHT, vec.dimensions.height);
            intent.putExtra(Constant.FRAME_RATE, vec.frameRate);
            intent.putExtra(Constant.BITRATE, vec.bitrate);
            intent.putExtra(Constant.ORIENTATION_MODE, vec.orientationMode.getValue());
            context.bindService(intent, mScreenShareConn, Context.BIND_AUTO_CREATE);
        } else {
            try {
                mScreenShareSvc.startShare();
            } catch (RemoteException e) {
                Log.e(TAG, Log.getStackTraceString(e));
            }
        }
    }

    // Stop screen sharing
    public void stop(Context context) {
        if (mScreenShareSvc != null) {
            try {
                mScreenShareSvc.stopShare();
                mScreenShareSvc.unregisterCallback(mNotification);
            } catch (RemoteException e) {
                Log.e(TAG, Log.getStackTraceString(e));
            }
            mScreenShareSvc = null;
        }
        context.unbindService(mScreenShareConn);
    }
}

After binding the screen sharing service, the screen sharing process creates an RtcEngine object and joins the channel for screen sharing.

public IBinder onBind(Intent intent) {
    // Creates an RtcEngine object
    // ...
    // Sets video encoding configurations
    setUpVideoConfig(intent);
    // ...
}

When creating the RtcEngine object in the screen sharing process, perform the following configurations:

// Mute all remote audio streams
mRtcEngine.muteAllRemoteAudioStreams(true);
// Mute all remote video streams
mRtcEngine.muteAllRemoteVideoStreams(true);
// Disable the audio module
mRtcEngine.disableAudio();

- Implement the local-camera process and the code logic to start the screen sharing process.

public class MultiProcess extends BaseFragment implements View.OnClickListener {
    private static final String TAG = MultiProcess.class.getSimpleName();
    // Define the uid for the screen sharing process
    private static final Integer SCREEN_SHARE_UID = 10000;
    // ...

    public void onActivityCreated(@Nullable Bundle savedInstanceState) {
        super.onActivityCreated(savedInstanceState);
        Context context = getContext();
        // ...
        try {
            // Create an RtcEngine instance
            engine = RtcEngine.create(context.getApplicationContext(), getString(R.string.agora_app_id), iRtcEngineEventHandler);
            // Initialize the screen sharing process
            mSSClient = ScreenSharingClient.getInstance();
            mSSClient.setListener(mListener);
        } catch (Exception e) {
            getActivity().onBackPressed();
        }
    }

    // ...
        // Run the screen sharing process and send information such as App ID and channel ID to the screen sharing process
        else if (v.getId() == R.id.screenShare) {
            String channelId = et_channel.getText().toString();
            // ...
            mSSClient.start(getContext(), getResources().getString(R.string.agora_app_id), null,
                    channelId, SCREEN_SHARE_UID, new VideoEncoderConfiguration(
                            // ...
                            ORIENTATION_MODE_ADAPTIVE
                    ));
            screenShare.setText(getResources().getString(R.string.stop));
            // ...
            mSSClient.stop(getContext());
            screenShare.setText(getResources().getString(R.string.screenshare));
            // ...
        }

    // ...
        // Create a view for local preview
        SurfaceView surfaceView = RtcEngine.CreateRendererView(context);
        if (fl_local.getChildCount() > 0) {
            fl_local.removeAllViews();
        }
        // ...
        int res = engine.joinChannel(accessToken, channelId, "Extra Optional Data", 0);
        if (res != 0) {
            showAlert(RtcEngine.getErrorDescription(Math.abs(res)));
            return;
        }
        join.setEnabled(false);
    // ...
}
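The sample passes Math.abs(res) to getErrorDescription, which reflects the convention that joinChannel returns 0 on success and a negative value on failure. The sketch below isolates that convention in plain Java so it can be checked without the SDK. JoinResult and its method names are hypothetical, and the value -2 used in the usage note is only an example of a negative return value.

```java
// Hypothetical helper that interprets the return value of joinChannel.
final class JoinResult {
    private JoinResult() {}

    // 0 means the call was accepted; any other value signals a failure.
    static boolean isSuccess(int res) {
        return res == 0;
    }

    // Converts a negative return value into the positive code used for error lookups.
    static int errorCode(int res) {
        return Math.abs(res);
    }
}
```

For example, JoinResult.isSuccess(-2) is false and JoinResult.errorCode(-2) is 2, which is the value the sample would hand to the error-description lookup.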

Agora provides an open-source sample project on GitHub to show how to send video from both screen sharing and the camera by using dual processes.